The Ides of March is an instrumental song that opens Killers, Iron Maiden's second studio album. It makes a great opener, and March is the month when spring starts in my part of the world, so it is always a time for optimism. The Ides of March is also the 15th of March in the Roman calendar (and the day Julius Caesar was assassinated). Enjoy the song here.

We have done our best on this release, with important help from the Prowler community of cloud security engineers around the world. Thank you all! Special thanks to the Prowler full-time engineers @jfagoagas, @n4ch04 and @sergargar! (and Bruce, my dog) ❤️

Important changes in this version (read this!):

Now, if you have AWS Organizations and are scanning multiple accounts using the assume role functionality, Prowler can get your account details like Account Name, Email, ARN, Organization ID and Tags and add them to CSV and JSON output formats. More information and usage here.
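To make the idea concrete, here is a minimal Python sketch of how account details could be flattened and appended to CSV rows. It is not Prowler's actual implementation (Prowler 2.x is written in bash). The input dictionaries follow the response shapes of the AWS Organizations `DescribeAccount` and `ListTagsForResource` APIs, while the function names, the `ACCOUNT_*` column names and the `key=value;key=value` tag encoding are assumptions made up for this illustration.

```python
import csv
import io


def flatten_account_metadata(describe_account_response, list_tags_response, organization_id):
    """Flatten Organizations API responses into extra output columns.

    The ACCOUNT_* column names are hypothetical, not Prowler's real headers.
    """
    account = describe_account_response["Account"]
    tags = list_tags_response.get("Tags", [])
    return {
        "ACCOUNT_NAME": account["Name"],
        "ACCOUNT_EMAIL": account["Email"],
        "ACCOUNT_ARN": account["Arn"],
        "ACCOUNT_ORG": organization_id,
        # Assumed encoding: tags joined as key=value pairs separated by ";"
        "ACCOUNT_TAGS": ";".join(f"{t['Key']}={t['Value']}" for t in tags),
    }


def add_metadata_to_rows(rows, metadata):
    """Return new finding rows with the account metadata columns appended."""
    return [{**row, **metadata} for row in rows]


def rows_to_csv(rows):
    """Serialize a non-empty list of dict rows to CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(rows[0].keys()))
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()
```

In a real scan the two response dictionaries would come from the Organizations API called from the management account (or a delegated role); here they are plain dictionaries so the sketch runs without AWS credentials.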

New Features

- 1 new check for S3 buckets with ACLs enabled, by @jeffmaley in #1023:

  7.172 [extra7172] Check if S3 buckets have ACLs enabled - s3 [Medium]

- feat(metadata): Include account metadata in Prowler assessments by @toniblyx in #1049

Enhancements

- Add whitelist examples for Control Tower resources by @lorchda in #1013

- Skip packages with broken dependencies when upgrading system by @dlorch in #1009

- Docs: Improve check_sample examples, add general comments by @lazize in #1039

- Added timestamp to temp folders for secrets related checks by @sectoramen in #1041

- Make python3 default in Dockerfile by @sectoramen in #1043

- Docs(readme): Fix typo by @jfagoagas in #1072

Fixes

- Fix issue extra75 reports default SecurityGroups as unused #1001 by @jansepke in #1006

- Fix issue extra793 filtering out network LBs #1002 by @jansepke in #1007

- Fix formatting by @lorchda in #1012

- Fix docker references by @mike-stewart in #1018

- Fix(check32): filterName base64encoded to avoid space problems in filter names by @n4ch04 in #1020

- Fix: when prowler exits with a non-zero status, the remainder of the block is not executed by @lorchda in #1015

- Fix(extra7148): Error handling and include missing policy by @toniblyx in #1021

- Fix(extra760): Error handling by @lazize in #1025

- Fix(CODEOWNERS): Rename team by @jfagoagas in #1027

- Fix(include/outputs): Whitelist logic reformulated to exactly match input by @n4ch04 in #1029

- Fix CFN CodeBuild example by @mmuller88 in #1030

- Fix typo CodeBuild template by @dlorch in #1010

- Fix(extra736): Recover only Customer Managed KMS keys by @jfagoagas in #1036

- Fix(extra7141): Error handling and include missing policy by @lazize in #1024

- Fix(extra730): Handle invalid date formats checking ACM certificates by @jfagoagas in #1033

- Fix(check41/42): Added tcp protocol filter to query by @n4ch04 in #1035

- Fix(include/outputs): Rolling back whitelist checking to RE check by @n4ch04 in #1037

- Fix(extra758): Reduce API calls. Print correct instance state. by @lazize in #1057

- Fix: extra7167 Advanced Shield and CloudFront bug parsing None output without distributions by @NMuee in #1062

- Fix(extra776): Handle image tag commas and json output by @jfagoagas in #1063

- Fix(whitelist): Whitelist logic reformulated again by @n4ch04 in #1061

- Fix: Change lower case from bash variable expansion to tr by @lazize in #1064

- Fix(check_extra7161): fixed check title by @n4ch04 in #1068

- Fix(extra760): Improve error handling by @lazize in #1055

- Fix(check122): Error when policy name contains commas by @plarso in #1067

- Fix: Remove automatic PR labels by @jfagoagas in #1044

- Fix(ES): Improve AWS CLI query and add error handling for ElasticSearch/OpenSearch checks by @lazize in #1032

- Fix(extra771): jq fail when policy action is an array by @lazize in #1031

- Fix(extra765/776): Add right region to CSV if access is denied by @roman-mueller in #1045

- Fix: extra7167 Advanced Shield and CloudFront bug parsing None output without distributions by @NMuee in #1053

- Fix(filter-region): Support comma separated regions by @thetemplateblog in #1071

New Contributors

- @jansepke made their first contribution in #1006

- @lorchda made their first contribution in #1012

- @mike-stewart made their first contribution in #1018

- @n4ch04 made their first contribution in #1020

- @jeffmaley made their first contribution in #1023

- @roman-mueller made their first contribution in #1045

- @NMuee made their first contribution in #1053

- @plarso made their first contribution in #1067

- @thetemplateblog made their first contribution in #1071

- @sergargar made their first contribution in #1073

Full Changelog: 2.7.0…2.8.0

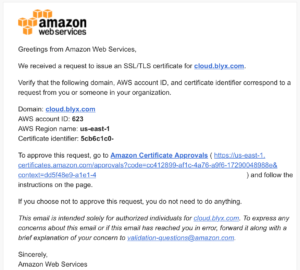

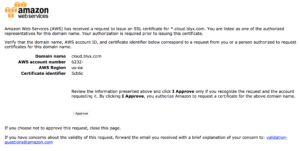

3. Once we have all DNS steps done, let’s create our wild card certificate with AWS Certificate Manager for

3. Once we have all DNS steps done, let’s create our wild card certificate with AWS Certificate Manager for

In order to give back to the Open Source community what we take from it (actually from the

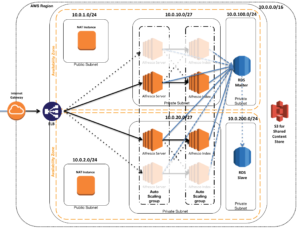

In order to give back to the Open Source community what we take from it (actually from the  Now, with this provided CloudFormation template you can deploy SecurityMonkey pretty much production ready in a couple of minutes.

Now, with this provided CloudFormation template you can deploy SecurityMonkey pretty much production ready in a couple of minutes.